I’m not going to sugarcoat it: I’ve always had a complicated relationship with Google’s AI efforts. Their journey has been interesting, to say the least. They began with whatever Bard was, which eventually led to the rebrand to Gemini, and I think their main consumer-facing AI products are still finding their footing. ChatGPT is still the default the average person reaches for when they think of AI, Claude has built its own loyal following among more technical people (and well, everyone now), and Gemini is just…there.

However, something I’ve always stood by is that Google’s best AI work isn’t happening directly within Gemini. It’s all happening in Google Labs, and while the tools you’ll find there are powered by Gemini under the hood, they feel like entirely different products. They’re focused and built to solve a specific problem instead of trying to be everything for everyone. Opal is a tool Google began testing in Labs in mid-2025, and with all the vibe-coding noise happening, I decided to give it a look once again. No surprise here, but it’s the most impressive no-code app builder I’ve tested, and it’s completely free to use.


The “anyone can build an app” promise, finally delivered

If you aren’t familiar with Google Labs, it’s basically the company’s experimental playground — a space where Google’s teams ship early-stage AI tools and let real users put them through their paces before they ever become full products. Some graduate into standalone tools, like NotebookLM did. Others stay experiments. However, all of them follow a solve-one-problem-really-well philosophy that makes Google Labs stuff feel so different from mainline Google products. Illuminate, Learn About, Antigravity, Stitch, Pomelli — they’re all examples of that same energy. Each one picks a specific use case and nails it in a way that a general-purpose chatbot never could.

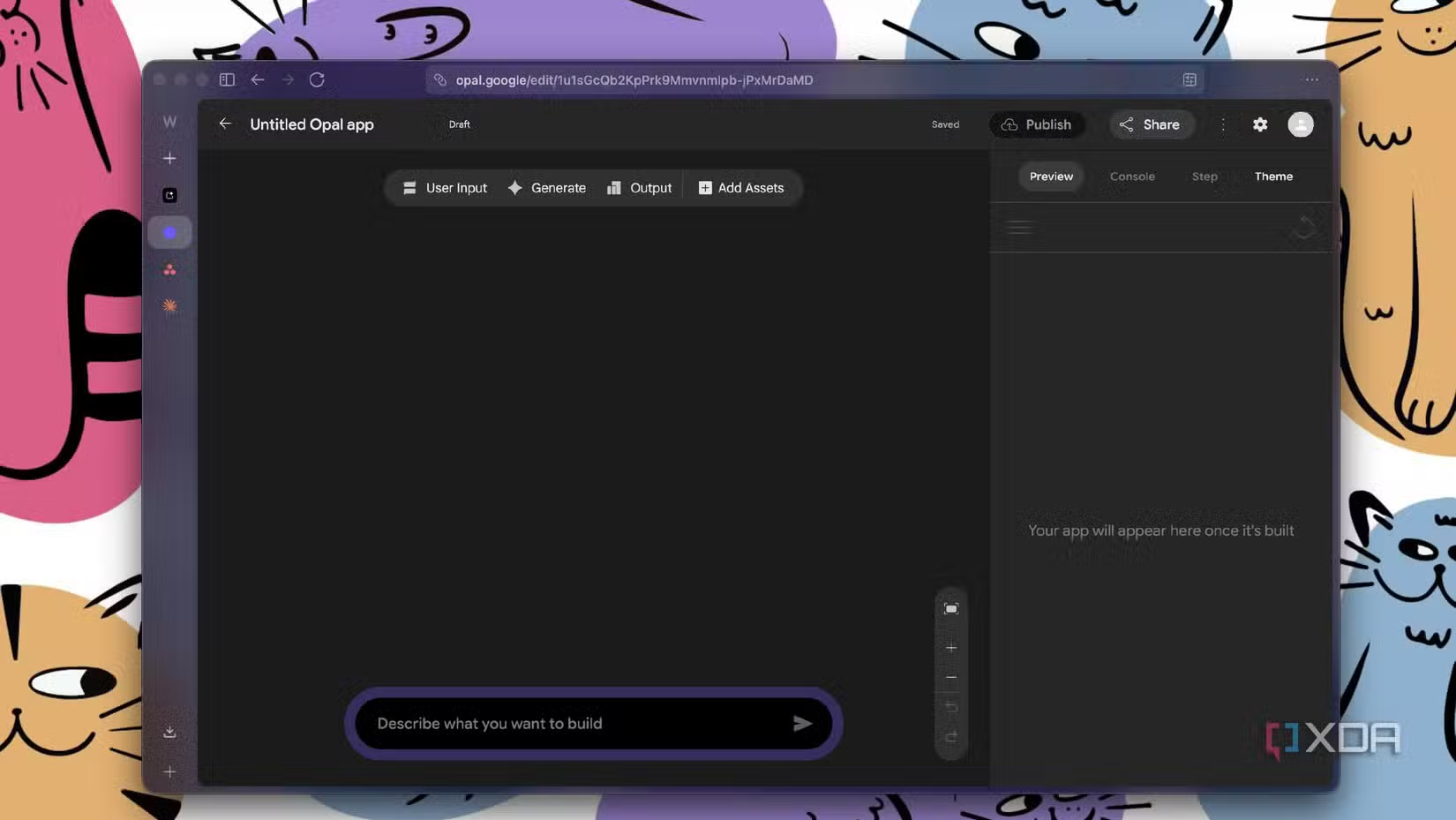

Opal is an experimental tool Google announced on July 24, 2025, and it’s designed to help you build “mini-apps” that are powered by AI. On the surface, it sounds like every other vibe-coding tool: describe what you want in plain English, get a working app. But Opal isn’t doing what Replit or Lovable do. Those tools generate code and present it to you. Opal doesn’t generate code at all. It chains together AI model calls, tools, and prompts into a visual workflow. Each app is a series of connected nodes: one that collects user input, one that calls Gemini, one that searches the web, one that generates an image with Imagen, one that formats the output as a styled webpage.

It feels a lot like n8n or Make.com if you’ve ever used those — that same node-based, visual workflow energy (except you don’t have to manually wire anything). You describe what you want, and Opal builds the entire flow for you. And if you want to tweak something, you can either tell it in plain English or jump into the visual editor and drag things around yourself.
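If the node idea feels abstract, here is a rough way to picture it in Python. To be clear, this is only my own sketch of the general "chain of steps" concept: Opal never shows you code, and every name below (`Node`, `run_workflow`, the three step functions) is made up for illustration. The model call is a stand-in that just builds a string.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical illustration only. Opal does not expose code, and none of
# these names come from Google. Each Node is one step in a workflow.
State = Dict[str, str]


@dataclass
class Node:
    name: str
    run: Callable[[State], State]  # takes the shared state, returns an extended copy


def collect_input(state: State) -> State:
    # Stand-in for a user-input node: tidy up whatever the user typed.
    return {**state, "topic": state.get("topic", "").strip()}


def call_model(state: State) -> State:
    # Stand-in for a model-call node. A real step would call an AI model here.
    return {**state, "draft": f"Explanation of {state['topic']}"}


def render_page(state: State) -> State:
    # Stand-in for an output node that formats the result as a small webpage.
    return {**state, "html": f"<h1>{state['topic']}</h1><p>{state['draft']}</p>"}


def run_workflow(nodes: List[Node], state: State) -> State:
    # Pass the state through each node in order, like following the arrows
    # between blocks in a visual editor.
    for node in nodes:
        state = node.run(state)
    return state


WORKFLOW = [
    Node("input", collect_input),
    Node("generate", call_model),
    Node("output", render_page),
]

result = run_workflow(WORKFLOW, {"topic": "  recursion "})
print(result["html"])
```

The point of the sketch is that each step only knows about its own input and output, which is why swapping or editing one block doesn't force you to touch the rest.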

If you read any of their announcements, you’ll notice the word “code” barely comes up. When it does, it’s only to say that you won’t see any when interacting with Opal. The entire framing is around workflows, prompts, models, and tools. That’s a very different game from tools like Replit and Lovable. For instance, when you’re testing something Lovable created, and you spot a bug, Lovable will fix it, but will also give you a very technical breakdown of what went wrong. You’ll spot it referencing file names, component trees, state management issues. That’s useful if you’re a developer, but if you’re not, it’s noise. Opal doesn’t have this problem. If something isn’t working, you click the step that’s off, read the prompt, and adjust it. Even when Google rolled out advanced debugging in their October 2025 update, they made a point of saying they “fundamentally improved the debugging program but intentionally kept it no-code.” You can run your workflow step-by-step, and errors show up in real time, localized to the exact step where the failure occurred.
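To show why step-level errors are so much easier to deal with, here is a small Python sketch of the idea. It is not how Opal's debugger is built (Google hasn't published that, and you never see code in the tool); the function and step names are hypothetical, and the "bug" is planted on purpose.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical illustration only: a tiny runner that executes steps one at a
# time and reports exactly which step failed. Not Opal's actual debugger.
State = Dict[str, str]
Step = Tuple[str, Callable[[State], State]]


def run_step_by_step(
    steps: List[Step], state: State
) -> Tuple[List[str], State, Optional[Tuple[str, str]]]:
    """Return (names of completed steps, last good state, failure or None)."""
    completed: List[str] = []
    for name, fn in steps:
        try:
            state = fn(state)
        except Exception as exc:
            # Stop at the first failure and attach it to the step's name.
            return completed, state, (name, repr(exc))
        completed.append(name)
    return completed, state, None


def ask_topic(state: State) -> State:
    return {**state, "topic": "binary search"}


def explain(state: State) -> State:
    # Planted bug: this step reads a key that no earlier step produced.
    return {**state, "text": state["topic_name"].upper()}


def render(state: State) -> State:
    return {**state, "html": f"<p>{state['text']}</p>"}


steps: List[Step] = [("ask_topic", ask_topic), ("explain", explain), ("render", render)]
completed, last_state, failure = run_step_by_step(steps, {})

if failure is not None:
    failed_step, message = failure
    print(f"Completed: {completed}; failed at '{failed_step}': {message}")
```

Because the failure is tied to a single named step, the fix is to open that one step and adjust it, which is the same experience the article describes in Opal, just without any code on screen.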

The tool also uses Gemini’s complete model suite under the hood, and you can manually switch between them for any step if you want to. By default, Opal seems to set each node to “Agent,” which lets it pick the best model for the job automatically.

But if you click into any step, you get a dropdown with the full lineup: Gemini 3 Flash for everyday tasks, Gemini 3.1 Pro for complex reasoning, Nano Banana and Nano Banana Pro for image generation, AudioLM for speech, Veo for video, Lyria 2 for instrumental music.

Think mini, build mighty

A lot of vibe-coding tools are positioned around letting you create production-ready applications with databases, authentication, payment integration, and all the infrastructure that comes with building real software. Opal isn’t trying to be that. It’s designed to help you create focused AI tools that do one thing really well. That’s what the “mini” in “mini-apps” really means.

For instance, if you’re a student in 2026, you’ve likely asked ChatGPT or any AI tool to explain a concept to you like you’re five. Every time you want to do it, you create a new thread, type out the same kind of prompt, maybe tweak the wording, wait for the response, and repeat. With Opal, you could build a dedicated tool for that in seconds! That’s exactly what I did. I sent the prompt below to Opal, and within seconds (I’m not exaggerating, it’s crazy fast), I had a fully working app.

I want a study app where the user pastes a complex topic or concept, and the app explains it at three levels – first like they’re 5 years old, then like they’re in high school, then the full academic version with proper terminology.

I then tried it on a concept that was recently covered in a programming course I’m taking this semester, and each explanation was genuinely impressive. It even included snippets of code throughout the explanation, which really helped. The UI was also very impressive for something that was generated extremely quickly, and that’s something I keep coming back to with Opal. These apps don’t look like rough prototypes or placeholder interfaces. They come out clean, well-structured, and honestly ready to share as-is.

Each step in the app is clearly laid out as its own block in the workflow. For the example above, Opal automatically created three nodes: one for collecting the topic from the user, one for generating the three explanations using a Gemini agent, and one for rendering everything as a styled webpage with auto-layout. I didn’t even tell it which model to use for each step or how to structure the output page! Opal figured that out on its own. It even wrote detailed prompts for each node, like instructing the output step to create a “Knowledge Journey” webpage that “guides the user from simplicity to complexity,” with card-like containers for each explanation level. All of that from a single prompt I typed in about thirty seconds. For instance, here’s the prompt Opal automatically wrote for the output step of the tool:

There’s an option that lets you tweak the prompt, but what’s impressive is that all of this happens within seconds, and from a very basic, one-line description at that.

You can generate a fully shareable link for your app right from the editor, and anyone with a Google account can use it immediately. For instance, you can use this link to view the mini-app I just used as an example in this article!

It’s completely free to use

The best part is that Opal is currently completely free to use. Since it is a Google Labs experiment, there is no pricing tier, no credit system, and no usage caps you need to worry about. You just build! So, if you had any reservations about trying it out, there’s genuinely nothing to lose.